The verdict: Meta is handing content enforcement to AI, and if your app touches Facebook or Instagram, your users are now subject to automated decisions made by a system you have zero insight into.

This week Meta announced a wide rollout of its AI support assistant across Facebook and Instagram, with a direct statement that it will “reduce our reliance on third-party vendors” for content moderation. Translation: the humans reviewing borderline content are being replaced by models. That’s a massive shift. And builders who ship on Meta’s platforms need to understand what it actually means.

What Meta Actually Said

Meta’s announcement frames this as a win for users: faster support, fewer delays, and AI taking over work such as “repetitive reviews of graphic content” and keeping up with adversarial actors pushing drugs and scams. Here’s the actual quote from their engineering blog:

“While we’ll still have people who review content, these systems will be able to take on work that’s better-suited to technology, like repetitive reviews of graphic content or areas where adversarial actors are constantly changing their tactics, such as with illicit drugs sales or scams.”

That’s carefully worded. “We’ll still have people” is doing a lot of heavy lifting in that sentence.

What they’re describing isn’t a hybrid system where humans stay in the loop for edge cases. It’s a handover where AI handles the volume — and the volume is everything. The humans left will be handling escalations and appeals, not primary review. The first decision on your content will be made by a model.

The History They’d Rather You Forget

This isn’t Meta’s first attempt at AI moderation — and the track record is not great.

In 2020, Facebook’s AI systems over-removed content at scale during a bug, taking down legitimate posts about Black Lives Matter protests. In 2021, the platform’s AI labelled a video of Black men as “primates” — a high-profile failure that led to a public apology and internal review.

The pattern: impressive in demos, unreliable in production, harmful to marginalised communities at disproportionate rates. AI moderation systems consistently perform worse on non-English content, LGBTQ+ topics, and minority languages, often because training data skews toward majority populations.

Meta knows this. They’ve had internal reports, external audits, and public controversies documenting it for years. The decision to proceed with AI-first moderation isn’t based on the AI being ready. It’s based on the cost calculation that human moderation at scale is expensive and AI is cheap.

What This Means If You’re Building on Meta’s Platforms

If your app integrates with Facebook or Instagram — a social login, posting on behalf of users, a business account integration — this changes your risk profile.

1. Your users can be actioned without human review.

A post your app publishes on behalf of a user can be removed, restricted, or flagged by a model making a first-pass decision, with no human in that loop. If the model is wrong, and at Meta’s scale even a small error rate produces a large absolute number of wrong calls, there’s no escalation path that resolves in a useful timeframe.

2. Appeal processes will get slower, not faster.

Here’s the counterintuitive consequence: when AI handles primary review at scale, the human appeals queue grows. You get more automated first decisions, which generate more appeals, which a smaller human team has to process. This is already happening on YouTube, where AI-first enforcement created a multi-week appeal backlog. Expect the same at Meta.

3. The rules are less legible than ever.

Human moderators apply policy with judgment. AI models apply pattern matching with statistical confidence thresholds. The behaviours that get your content actioned will become harder to predict, not easier. You can read Meta’s Community Standards — but the model’s decision boundary is a black box even to Meta’s own engineers.
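
To make the backlog argument in point 2 concrete, here is a minimal arithmetic sketch. The function names and every number fed into it are invented for illustration; nothing here reflects Meta's or YouTube's real volumes. It only shows that once daily appeals exceed daily human review capacity, the queue grows without limit.

```python
def appeal_backlog(days, appeals_per_day, reviews_per_day, starting_backlog=0):
    """Return the appeal queue size at the end of each day.

    All inputs are hypothetical; this is plain arithmetic, not a model
    of any real platform.
    """
    backlog = starting_backlog
    history = []
    for _ in range(days):
        # New appeals arrive, reviewers clear what they can; never below zero.
        backlog = max(0, backlog + appeals_per_day - reviews_per_day)
        history.append(backlog)
    return history


def wait_in_days(backlog, reviews_per_day):
    """Rough wait for an appeal filed at the back of the current queue."""
    return backlog / reviews_per_day
```

With made-up inputs of 1,200 appeals a day against capacity for 1,000 reviews, the queue grows by 200 every day. Cut the review team and the same appeal volume produces a longer wait, which is the mechanism the paragraph describes.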

The Practical Checklist for Builders

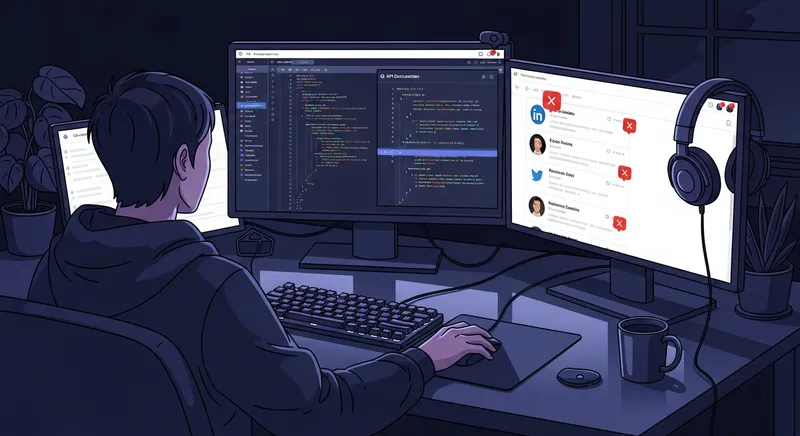

If you’re building on Meta’s Graph API or integrating with their platforms, here’s what to do now:

Audit your content publishing flows. Any place your app publishes content on behalf of users is now higher-risk. Review what you’re posting, and run it through your own content checks before it hits Meta’s systems. Don’t treat Meta’s moderation as a safety net; it’s now an enforcement mechanism.
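
As a rough sketch of what "your own content checks" could look like, the snippet below runs a few local rules before a post is handed to any platform API. The rules, thresholds, and names (`BLOCKED_TERMS`, `MAX_LINKS`, `pre_publish_check`) are hypothetical placeholders, not Meta's policies; you would swap in whatever matters for your product.

```python
import re
from dataclasses import dataclass, field

# Hypothetical rules for illustration only; these are NOT Meta's policies.
BLOCKED_TERMS = {"free money", "guaranteed returns"}
MAX_LINKS = 2
URL_PATTERN = re.compile(r"https?://\S+", re.IGNORECASE)


@dataclass
class CheckResult:
    allowed: bool
    reasons: list = field(default_factory=list)


def pre_publish_check(text: str) -> CheckResult:
    """Run local checks on a post before sending it to a platform."""
    reasons = []
    lowered = text.lower()

    if not text.strip():
        reasons.append("empty post")

    # Sorted so the reasons come back in a stable order.
    for term in sorted(BLOCKED_TERMS):
        if term in lowered:
            reasons.append(f"blocked term: {term}")

    link_count = len(URL_PATTERN.findall(text))
    if link_count > MAX_LINKS:
        reasons.append(f"too many links: {link_count}")

    return CheckResult(allowed=not reasons, reasons=reasons)
```

Call `pre_publish_check(post_text)` in the publishing path and only proceed when `allowed` is true. Returning the reasons, rather than a bare boolean, lets you show the user why a post was held back.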

Build your own appeals documentation. When your users get actioned, they’ll need clear records of what was posted, when, and why. Your app should log this. Meta’s appeal process requires evidence — make sure your users have it.
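
One minimal way to keep that record is an append-only JSON Lines file, sketched below. The field names, the file layout, and the functions `log_published_post` and `records_for_user` are assumptions made for this example; a production app would more likely write to a database. The content hash is there so you can later show a record has not been altered.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def log_published_post(log_path, user_id, platform, content, reason,
                       platform_post_id=None):
    """Append one record of what was posted, when, and why."""
    record = {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "platform": platform,
        # Whatever ID the platform returned for the post, if any.
        "platform_post_id": platform_post_id,
        "reason": reason,
        "content": content,
        "content_sha256": hashlib.sha256(content.encode("utf-8")).hexdigest(),
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def records_for_user(log_path, user_id):
    """Return every logged record for one user, oldest first."""
    path = Path(log_path)
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as f:
        records = [json.loads(line) for line in f if line.strip()]
    return [r for r in records if r["user_id"] == user_id]
```

When a user is actioned, `records_for_user` gives you an export of exactly what your app published on their behalf, with timestamps, to attach to an appeal.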

Diversify your social integrations. If your app’s core loop depends on Meta platform access, that’s a concentration risk that just got higher. This is a good time to build out alternatives — LinkedIn, Bluesky API, or direct email/newsletter flows that don’t depend on Meta’s enforcement decisions.
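
Structurally, diversification is easier if your app talks to a thin publishing interface instead of calling one platform directly. The sketch below is one possible shape, with hypothetical names throughout (`Publisher`, `StubPublisher`, `publish_everywhere`); the stub stands in for real integrations and makes no actual API calls.

```python
from typing import Protocol


class Publisher(Protocol):
    """Anything that can publish a piece of text to one channel."""
    name: str

    def publish(self, text: str) -> bool: ...


class StubPublisher:
    """Stand-in for a real integration; replace publish() with API calls."""

    def __init__(self, name, healthy=True):
        self.name = name
        self.healthy = healthy
        self.sent = []

    def publish(self, text):
        if not self.healthy:
            return False  # simulates a blocked or restricted channel
        self.sent.append(text)
        return True


def publish_everywhere(publishers, text):
    """Send text to every channel and report per-channel success."""
    results = {}
    for p in publishers:
        try:
            results[p.name] = p.publish(text)
        except Exception:
            # One failing channel should not stop the others.
            results[p.name] = False
    return results
```

With this shape, losing one channel to an enforcement action shows up as a single `False` in the results, while the other channels keep delivering.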

Monitor your account standing actively. Meta’s Account Quality tool shows your business account status. Check it more often. AI enforcement acts faster than human enforcement — issues that would’ve taken days to escalate now surface in hours.

The Bigger Picture

Meta is one company. But they’re the company that owns the two largest social platforms in the West and the largest messaging platform globally. When they move to AI-first content moderation, they’re setting a precedent the rest of the industry will follow.

TikTok’s parent ByteDance already runs AI-first moderation. YouTube has been pushing in this direction for three years. Twitter/X moved this way aggressively after the 2022 layoffs. The human moderation era is ending — not because AI is ready, but because it’s cheaper and the platforms have decided that’s good enough.

The cost will be paid by users whose content gets wrongly actioned, by communities whose context the models were never trained to understand, and by builders whose apps get caught in enforcement sweeps they have no visibility into.

Understanding the system you’re building on isn’t optional. It’s how you ship something that survives.

The verdict: Meta’s AI moderation rollout is a cost-cutting decision dressed as a product improvement. For builders, the practical response is simple: don’t rely on Meta’s systems as a safety net, document everything your app publishes, and start building platform diversification into your roadmap. The AI is making the first call on your users’ content now — act accordingly.